Contemplation on Compiling

Posted on January, 19 2016

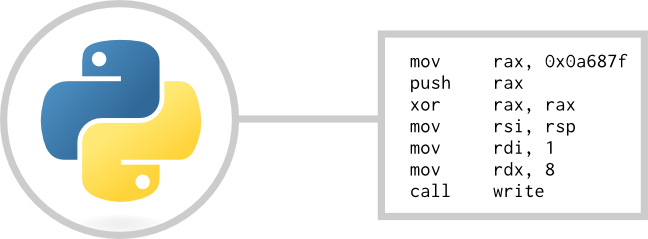

Computer programs that analyze and generate data that can be interpreted by lower-level systems (also known as compilers) are critical to any software developer out there. Few have ventured into how compilers generate the code that runs the programs they have meticulously designed, although understanding compilers and how they operate can help you understand why a computer program even has a chance to run on a piece of silicon.

Everyone who has played about with programming and had to use a compiler understands a few basic facts about what a compiler does:

-

Looks at syntactically correct code and builds an abstract tree of data representing the code

-

Iterates over built 'understanding' of the code to compile and translates into lower-level commands and subroutines

-

Links with external libraries and spits out an executable that does what the original code meant to do

Beyond that, mainstream compilers implement further steps such as many different standards and optimization for specific platforms. When going out to implement a compiler there are a myriad of things to consider for even the most simplistic compiler out there. The first step is how a compiler can translate code such as int x = 3; into its own meaning.

Lexical Analysis

A way to make a computer program understand code is using a 'Lexer', or what is known as Lexical Analysis. Put in layman's terms, this process takes a string such as int x = 3; and parses each digestible bit of information that string provides into what can be called 'tokens'. For example, on the string int x = 3; the list of tokens would be as follows:

| String | Token Type |

|---|---|

int |

Type |

x |

Variable Name |

= |

Assignment Operator |

3 |

Static Value |

; |

End of statemnet |

From this table, each string is represented as an individual 'Token Type'. Building on top of this analysis, using computational logic these arrays of tokens and strings can be classified much easier than if the string were given as is. Once a string of tokens is classified an auxiliary algorithm can apply the values provided into a set of op-codes designed for that specific purpose.

What is a variable?

The biggest concept in computer programming that one comes across is the idea of a variable. When you assign a variable, you generally intend to change its value. For this to happen, you have to know where to find the variable. This is where compilers come in. Tell a computer to find mySuperImportantVariable, and it will act very confused. A computer only knows how to find a value based on a number. But wait, you said? Your program uses human-readable words for variables? Well, no. Code uses human-friendly, easy-to-remember words for variable names. To a computer, these easy-to-remember words take a long time to find due to the amount of different names that can possibly exist given a string with the length n. Before code is compiled into an executable program, the compiler must translate these easy to remember names into specific addresses in some region of memory. For this to happen, the compiler must know how to translate these names into addresses.

Translating human readable names into addresses boils down to counting ducks. For each instantiated variable, depending on scope, will be assigned a unique identifier that, for all intensive purposes, can just be a number. Each variable in the run-time environment would be just an integer pointing to a location in memory, whether it is a function, object, or a fundamental data type.

Concluding thoughts

Each and every program ever ran uses a compiler (or assembler) to translate human-friendly syntax into the simplistic instructions that computers can understand.

One good reading into simple compilers is Let's build a compiler, by Jack Crenshaw. It covers the concepts required to make a simplistic compiler and a basic programming language.